Writing analytics and the LAK15 “State of the Field” panel

We just concluded an outstandingly run LAK15 conference hosted expertly by Marist College, Poughkeepsie NY.

In the closing panel, a few of us shared thoughts on the state of the field.

International LA expert panel with @sbuckshum rep Australia @dgasevic UK @hthwaite Canada @stephteasley US pic.twitter.com/lyAHciNjnu

— Shane Dawson (@shaned07) March 20, 2015

The replay will join all the other LAK talks on the SoLAR YouTube channel eventually. In the meantime, here are my extended notes and links, and in particular a summary of how Writing Analytics has emerged @LAK.

Analytics in Oz

Of course after nearly 8 months in Sydney I’m eminently qualified to talk about the state of play in Australia 😉

Luckily, more authoritative accounts come from two national projects funded by the Office of Learning & Teaching, analysing the current state of learning analytics in higher education, as summarised in the LAK15 Poster:

- The first project led by University of South Australia has conducted a dual qualitative/quantitative analysis of the strategic rationale for analytics, as perceived by university administrators and researchers, and a framework for advancement.

[LAK15 Poster Abstract] [Poster PDF]

- A second project led by Charles Darwin University complements this with a focus on how academics ‘in the trenches’ perceive analytics for retention. They will share their findings next month at a symposium…

In addition, a few months ago I hosted the Australian Learning Analytics Summer Institute which attracted 100 delegates, and gives a feel for current activities.

The emergence of Academic Writing Analytics @LAK

We were invited to comment on what struck us at LAK. Firstly, the record-breaking delegate numbers (300?), especially the ~100 newcomers (right?), were very heartening. Also, the serious level of sponsorship from companies reflects the rise of the field and the growth of the marketplace. In a far-from-systematic analysis of LAK trends, like Prog Chair Agathe Merceron in her opening welcome, plus several others, I’ll pick out Writing Analytics as the theme of the year.

Writing is the primary window onto learners’ minds for educational assessment in most disciplines. Just as students learn to “show their working” in maths to demonstrate how they reach a solution, in the medium of language they must make their thinking visible, leading the reader through the chain of reasoning by the appropriate use of linguistic constructions that are the hallmarks of educated, scholarly writing. In that sense, it might be surprising that Writing Analytics has only become highly visible this year, and I’m not sure what the explanation is. Perhaps it is simply a question of it taking a few years for a new field to connect one-by-one with key people in the relevant tributaries that flow into it. At LAK11 there were no writing analytics papers, with just six from LAK12-14, including two DCLA workshop papers [1-6]. (Slight tangent: the LAK Data Challenge has up till now been a scientometrics challenge, and represents a different kind of writing analytics, one aimed at researchers.)

We convened the pre-conference workshops on Discourse-Centric Learning Analytics (DCLA13; DCLA14) in order to bring in key researchers in fields such as CSCL and AIED. In a similar vein, this year LAK invited Danielle McNamara, SoLET Lab Director at Arizona State University, as a keynote speaker.

This put writing analytics on the radar for many delegates, making them aware of different classes of writing support tool, and the capacity of text analysis to give insight into the cognition and emotions of student writers. The ASU team had a strong presence in LAK15 papers/demo [7-11], also strengthening the relevance of LAK to the K-12 community. In addition, there were papers spanning very different technologies, and genres of writing, from Queensland University of Technology (adults, reflective writing), and The Open University (adults, masters-level essays) [12-13].

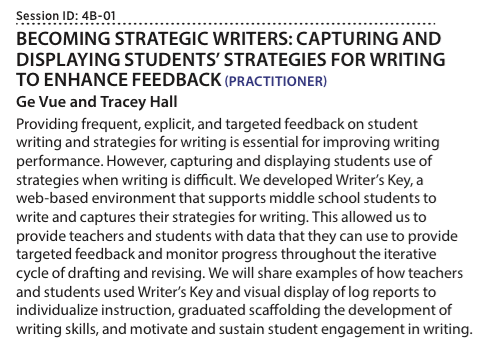

In addition, the team at CAST gave a practitioner report on their Writing Key web app, a lower-tech approach which structures K6 children’s writing with strong templates and cues, rather than using NLP to extract structure from free text. Here’s the abstract since it’s not in the proceedings:

Interestingly, the fact that they ran out of time to embed visualisations in the tool meant they hand-generated prototype views in Tableau, in order to elicit reactions. A simple bubble chart showing the most frequent words used proved to be most useful.
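The data behind a word-frequency bubble chart like that is simple to produce. Here is a minimal Python sketch of the counting step only, not CAST’s actual implementation: the function name `top_words`, the tiny stop-word list and the sample text are all assumptions made up for illustration.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stop-word list; a real tool would use a fuller one.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "it", "was", "on"}


def top_words(text, n=5):
    """Return up to n of the most frequent non-stop-words as (word, count) pairs."""
    # Lower-case the text and pull out runs of letters (and apostrophes).
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS)
    return counts.most_common(n)


# Made-up sample text standing in for a child's draft.
sample = "The dog ran to the park. The dog saw a cat, and the cat ran."
for word, count in top_words(sample, 3):
    # In a bubble chart, each count would set the size of that word's bubble.
    print(word, count)
```

Each (word, count) pair maps onto one bubble, with the count driving its size; the charting itself is left to whatever visualisation tool is at hand.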

Not surprisingly, automated essay grading evokes strong reactions from many people. In my own mind, a more appropriate strategy to pursue (at UTS:CIC) is to place a lesser burden on the technology, and also avoid any suggestion that skilled readers can be automated out of their jobs. Right now, students receive zero feedback on drafts: there simply isn’t the human capacity to provide it except in exclusive educational settings with extremely low student-teacher ratios. Yet we know that timely, personalised, formative feedback on work-in-progress is about the most powerful way to build learner confidence and skill. So the use case that I (and UTS academics) find most compelling is that of students checking their draft texts. We’ve gone from spell-checkers, to grammar checkers, to readability checkers, to plagiarism checkers. Next step in evolution: academic writing checkers.

Moving on from writing, I made a few general comments on the Who? How? What? Why? of the learning analytics field.

WHO + HOW? From pretty UIs to radically participatory design

In her comments, Stephanie Teasley drew our attention to an article in ACM Interactions, the magazine for the Human-Centred Informatics community. The Big Hole in HCI Research proposes this framework as a way to reflect on the field:

Also coming from an HCI background, I’m obviously a fan of user interfaces that are a delight to use. But HCI had an early identity crisis, after we got branded as “the guys to bring in (normally very late) who will make it pretty” — that’s just insulting to the user (and HCI designers!) — if ‘it’ (the system functionality) is completely unfit for purpose in more profound respects.

Learning Analytics needs to learn from history, skip this naïve baby step and move directly to what we now know about design. Analytics design must be profoundly participatory and experiential in approach, with real stakeholder engagement, through prototypes that can fail early and often via rapid learning loops. This inevitably shifts power away from those who wield it in traditional systems design, but it’s the only way to mould new kinds of tools that can embed into an organisation — which will itself adapt in response to the new feedback loops that analytics sets up.

WHAT+WHY? What kinds of learners are we trying to create, and why?

Learning Analytics are never neutral: every approach reinforces a particular way of teaching and learning — the learning activities it’s designed to track; the data it consumes; the patterns it models; the assumptions about who the end-users are; the interventions it’s designed to enable; the timescale of that cycle — all are grounded within the epistemology, pedagogy and assessment triangle.

We get excited about what analytics can measure that old assessment technology could not. So let’s ensure we escape from the classic trap for conventional assessment@scale. Let’s invent analytics that measure what we really value, not value merely what can be easily measured. Moreover, we know from research into information infrastructure (e.g. Sorting Things Out) that new, highly visible “technology” gradually sinks below conscious attention into the everyday infrastructure. So let’s make sure we’re reinforcing the foundations of a building fit for purpose in these complex times.

So for instance, most teachers need to teach within the current education system, and most young people need to perform in it to the best of their ability. There remains an important role for analytics that accelerate performance in high-stakes school exams, and perhaps these will even release time in the over-packed curriculum for more authentic forms of learning and assessment.

If you want to make a living from analytics, your product must sell to people who want to buy now. This does however mean that most products will tend to have shorter time horizons, and will in effect be turbocharging the current system.

Our job as the LAK community is not only to foster a healthy dialogue and collaborative relationships with vendors, but also to look further and see the bigger picture. The academy has this luxury, and responsibility. Most thought leaders in education make us acutely aware of the limitations of current teaching and assessment for ‘the age of complexity’. As a field we must help effect the shift to more authentic learning, by learners better equipped to tackle extreme socio-technical complexity.

Are we building analytics for the schools and universities of 2015, or are we helping to design the fabric for 2025? A lot of people need evidence to buy into new models of learning: educators, policymakers, parents, learners themselves. Our calling is to create the new tools, which will establish the new kind of evidence base that helps make new learning paradigms credible.

Writing Analytics @LAK Conference: bibliography

1. McNely, B. J., P. Gestwicki, J. H. Hill, P. Parli-Horne and E. Johnson (2012). Learning analytics for collaborative writing: a prototype and case study. Proceedings of the 2nd International Conference on Learning Analytics and Knowledge, Vancouver, British Columbia, Canada, ACM. http://dx.doi.org/10.1145/2330601.2330654

2. Lárusson, J. A. and B. White (2012). Monitoring student progress through their written “point of originality”. Proceedings of the 2nd International Conference on Learning Analytics and Knowledge, Vancouver, British Columbia, Canada, ACM. http://dx.doi.org/10.1145/2330601.2330653

3. Simsek, D., S. Buckingham Shum, Á. Sándor, A. De Liddo and R. Ferguson (2013). XIP Dashboard: Visual Analytics from Automated Rhetorical Parsing of Scientific Metadiscourse. 1st International Workshop on Discourse-Centric Learning Analytics, at 3rd International Conference on Learning Analytics & Knowledge, Leuven, BE (Apr. 8-12, 2013). Open Access Eprint: http://oro.open.ac.uk/37391

4. Southavilay, V., K. Yacef, P. Reimann and R. A. Calvo (2013). Analysis of collaborative writing processes using revision maps and probabilistic topic models. Proceedings of the Third International Conference on Learning Analytics and Knowledge, Leuven, Belgium, ACM. http://dx.doi.org/10.1145/2460296.2460307

5. Whitelock, D., D. Field, J. T. E. Richardson, N. V. Labeke and S. Pulman (2014). Designing and Testing Visual Representations of Draft Essays for Higher Education Students. 2nd International Workshop on Discourse-Centric Learning Analytics, Fourth International Conference on Learning Analytics and Knowledge, Indianapolis, Indiana, USA. https://dcla14.files.wordpress.com/2014/03/dcla14_whitelock_etal.pdf

6. Simsek, D., S. Buckingham Shum, A. De Liddo, R. Ferguson and Á. Sándor (2014). Visual analytics of academic writing. Proceedings of the Fourth International Conference on Learning Analytics And Knowledge, Indianapolis, Indiana, USA, ACM. http://dx.doi.org/10.1145/2567574.2567577

7. Snow, E. L., L. K. Allen, M. E. Jacovina, C. A. Perret and D. S. McNamara (2015). You’ve got style: detecting writing flexibility across time. Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723592

8. Dascalu, M., S. Trausan-Matu, P. Dessus and D. S. McNamara (2015). Discourse cohesion: a signature of collaboration. Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723578

9. Crossley, S., L. K. Allen, E. L. Snow and D. S. McNamara (2015). Pssst… textual features… there is more to automatic essay scoring than just you! Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723595

10. Allen, L. K., E. L. Snow and D. S. McNamara (2015). Are you reading my mind?: modeling students’ reading comprehension skills with natural language processing techniques. Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723617

11. LAK15 Demo: ReaderBench: An Integrated Tool Supporting Both Individual And Collaborative Learning. Mihai Dascalu, Lucia Larise Stavarche, Stefan Trausan-Matu, Phillipe Dessus, Bianco Maryse and Danielle McNamara [pdf] (See also this book chapter)

12. Simsek, D., Á. Sándor, S. Buckingham Shum, R. Ferguson, A. De Liddo and D. Whitelock (2015). Correlations between automated rhetorical analysis and tutors’ grades on student essays. Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723603. Open Access Eprint: http://oro.open.ac.uk/42042

13. Gibson, A. and K. Kitto (2015). Analysing reflective text for learning analytics: an approach using anomaly recontextualisation. Proceedings of the Fifth International Conference on Learning Analytics And Knowledge, Poughkeepsie, New York, ACM. http://dx.doi.org/10.1145/2723576.2723635
